Abstract

Background and Purpose: The present age of digitalization brings with it progress and new possibilities for health care in general and clinical psychology/psychotherapy in particular. Internet- and mobile-based interventions (IMIs) have often been evaluated. Chatbots are a fully automated form of IMI. They are automated computer programs that are able to hold, e.g., a script-based conversation with a human being. Chatbots could contribute to the extension of health care services. The aim of this review is to conceptualize the scope and to work out the current state of the art of chatbots fostering mental health. Methods: The present article is a scoping review on chatbots in clinical psychology and psychotherapy. Studies that utilized chatbots to foster mental health were included. Results: The technology of chatbots is still experimental in nature. Most studies are pilot studies. The field lacks high-quality evidence derived from randomized controlled studies. Results with regard to practicability, feasibility, and acceptance of chatbots to foster mental health are promising but not yet directly transferable to psychotherapeutic contexts. Discussion: The rapidly increasing research on chatbots in the field of clinical psychology and psychotherapy requires corrective measures. Effectiveness, sustainability, and especially safety, together with technology impact assessments, should be built into future funding programs for chatbots in clinical psychology and psychotherapy as a corrective.


Keywords: Software agent, Chatbot, Clinical psychology, Psychotherapy, Conversational bot

Chatbots in Clinical Psychology and Psychotherapy to Promote Mental Health: A Scoping Review

We find ourselves in the age of digitization, an age in which our work, the economy, and science are using increasingly intelligent machines that make our everyday life easier, including our private lives [World Economic Forum, 2018]. The German Federal Ministry of Education and Research has declared artificial intelligence (AI) one of the key technologies of the future and the theme of science year 2019 [Bundesministerium für Bildung und Forschung, 2018]. AI systems are technical learning systems that can process problems and adapt to changing conditions. They are already being used for road traffic control and to support emergency workers [Bundesministerium für Bildung und Forschung, 2018]. New possibilities are also being created by AI in the clinical/medical context. User data can be linked with up-to-date research data, which could improve medical diagnoses and treatments [Salathé et al., 2018]. Digitization trends will also affect clinical psychology and psychotherapy; chatbots, for example, could be used increasingly and gain importance as the next generation of psychological interventions. In this context, chatbots are programs that hold conversations with users – in the first instance technically based on scripts (plots that steer the conversation), which should be created by psychotherapists [Dowling and Rickwood, 2013; Becker, 2018]. Such chatbots are currently closer to full-text search engines than to stand-alone AI systems [Yuan, 2018].

In Germany, only a small proportion of people who are diagnosed with a mental disorder have contact with the health care system with regard to their mental health problems within 1 year [Jacobi et al., 2014]. Those affected do not take advantage of the available services for various reasons [Andrade et al., 2014]. Among these are worry about stigmatization [Barney et al., 2006], limitations of time or location [Paganini et al., 2016], negative attitudes towards pharmacological and psychotherapeutic treatment options [Baumeister, 2012], negative experiences with professional caregivers [Rickwood et al., 2007], and lack of insight into their illness [Zobel and Meyer, 2018]. Psychological Internet interventions represent an innovative potential that has resulted from progress in digitization. Barak et al. [2009] define a web-based intervention as “a primarily self-guided intervention program that is executed by means of a prescriptive online program operated through a website and used by consumers seeking health- and mental-health-related assistance” [Barak et al., 2009; p. 5]. Psychological Internet interventions have frequently been evaluated and are viewed as a medium independent of time and place [Carlbring et al., 2018; Ebert et al., 2018]. They might be able to help reduce treatment barriers and expand the availability of care [Baumeister et al., 2018; Carlbring et al., 2018; Ebert et al., 2018]. Numerous studies have shown that these interventions, often using cognitive-behavioral techniques, are comparable in their effectiveness to classical face-to-face psychotherapy [Andersson et al., 2014; Carlbring et al., 2018]. Psychological problems such as anxiety and depression are already being effectively addressed in this way [Andersson et al., 2014, 2016; Carlbring et al., 2018].
When comparing the effectiveness of classical psychotherapy with Internet- and mobile-based interventions (IMIs), it should be considered that interindividual differences, for example with regard to openness to new experiences, could affect whether and how strongly people benefit from the different forms of presentation (online or classical) [Andersson et al., 2016; Carlbring et al., 2018].

Chatbots are an innovative variant of psychological IMI with the potential to effect lasting change in psychotherapeutic care, but also with substantial ethical, legal (data protection), and social implications. The key objectives of the present review are:

(1) conceptualizing the scope of chatbots in clinical psychology and psychotherapy;

(2) working through the evidence about chatbots in promoting mental health; and

(3) presenting the opportunities, limits, risks, and challenges of chatbots in clinical psychology and psychotherapy.

Conceptualizing the Scope

Chatbots are computer programs that hold a text- or speech-based dialogue with people through an interactive interface. Users thus have a conversation with a technical system [Abdul-Kader and Woods, 2015]. The program of the chatbot can imitate a therapeutic conversational style, enabling an interaction similar to a therapeutic conversation [Fitzpatrick et al., 2017]. The chatbot interacts with the user fully automatically [Abdul-Kader and Woods, 2015].

Chatbots are a special kind of human-machine interface that provides users with chat-based access to functions and data of the application itself (e.g., Internet interventions). They are currently used mainly for customer communication in online shopping [Storp, 2002], but also in teaching [Core et al., 2006] and the game industry [Gebhard et al., 2008]. Chatbots are already particularly important in the economic domain [World Economic Forum, 2018]. As demand for a certain application grows, new instances of a single chatbot can be launched with small technical efforts, so that the chatbot can hold many parallel conversations at the same time (high scalability). This enables people to use freed capacities for more complex aspects of their work [Juniper Research, 2018; World Economic Forum, 2018]. The rapid progress in new technologies is also bringing about changes and new opportunities in health care in general and clinical psychology/psychotherapy in particular [Juniper Research, 2018; World Economic Forum, 2018].

Research interest in chatbots for use in clinical psychology and psychotherapy is growing by leaps and bounds, as can be seen by the increasing number of (pilot) studies in this area [Dale, 2016; Brandtzaeg and Følstad, 2017], as well as the growing number of online services offered by health care providers (e.g., health apps with chat support).

Chatbots can be systematized with regard to (1) areas of application, (2) underlying clinical-psychological/psychotherapeutic approaches, (3) performance of the chatbot, (4) goals and endpoints, and (5) technical implementation (Fig. 1).

Characteristics of chatbots in clinical psychology and psychotherapy. AIML, artificial intelligence markup language.


Areas of Application

Promising areas for the use of chatbots in the psychotherapeutic context could be support for the prevention, treatment, and follow-up/relapse prevention of psychological problems and mental disorders [Huang et al., 2015; D'Alfonso et al., 2017; Bird et al., 2018]. They could be used preventively in the future, for example for suicide prevention [Martínez-Miranda, 2017]. Current research shows that suicidal ideation and/or suicidal behavior among users of social media, for example, can be detected with automated procedures [De Choudhury et al., 2016]. Chatbots could then, for example, automatically inform users of nearby psychological/psychiatric services. In the treatment of psychological problems, they might offer tools that participants could work with on their own. After the completion of classical psychotherapy, chatbots might be offered in the future to stabilize intervention effects, facilitate the transfer of the therapeutic content into daily life, and reduce the likelihood of relapse [D'Alfonso et al., 2017].

Clinical-Psychological/Psychotherapeutic Approaches

The scripts used by chatbots to address clinical-psychological issues should be based on well-evaluated principles of classical face-to-face psychotherapy (e.g., cognitive behavioral therapy). On the basis of such evidence-based scripts, within which the chatbot program follows options for action (e.g., using if-then rules), conversations can be held that are similar to therapeutic discussions [Dowling and Rickwood, 2013; D'Alfonso et al., 2017; Becker, 2018]. It is becoming clear from the research on IMIs that all approaches covered by the German Psychotherapy Guidelines, such as psychodynamic or behavioral therapy, can be suitable for translation into an online format [Paganini et al., 2016]. The approaches of interpersonal therapy, acceptance and commitment therapy, and mindfulness-based therapy have also been translated into online services and evaluated for use in either guided or unguided self-help interventions [Paganini et al., 2016].

Performance of the Chatbot

The effectiveness of the chatbot may vary depending on the modality in which the conversation occurs. There are text-based chatbots (often referred to in the literature as conversational agents or chatbots) and chatbots that use natural-language, speech-based interfaces in dialogue systems (such as Apple's Siri, Amazon's Alexa, Microsoft's Cortana, and Google's Allo) [Bertolucci and Watson, 2016]. From a technical point of view, speech-based chatbots are text-based chatbots that also have functions for speech recognition and speech synthesis (machine reading aloud).

The simpler chatbots are based mainly on recognizing certain key terms with which to steer a conversation. More powerful chatbots can analyze user input and communication patterns more comprehensively, thus responding in a more precise way and deriving contextual information, such as users' emotions [Bickmore et al., 2005a,b].

Relational chatbots, sometimes referred to as contextual chatbots, simulate human capabilities, including social, emotional, and relational dimensions of natural conversations [Bickmore, 2010]. Computer-generated characters, so-called avatars, are often used in the design of a chatbot identity; these mimic the key attributes of human conversations and are often studied under the label embodied conversational agents in the literature [Beun et al., 2003; Provoost et al., 2017]. The more mental attributes the chatbot has, the greater its similarity to humans (anthropomorphism) [Zanbaka et al., 2006]. Anthropomorphism refers to the extent to which the chatbot can imitate behavioral attributes of a therapist [Zanbaka et al., 2006].

In the psychotherapeutic context of promoting mental health, social attributes [Krämer et al., 2018] and the ability of the chatbot to express empathy appear to be important factors in fostering a viable basis for interaction between a person and a chatbot [Bickmore et al., 2005a; Morris et al., 2018; Brixey and Novick, 2019].

Goals and Endpoints

Chatbots could take over time-consuming psychotherapeutic interventions that do not require more complex therapeutic competences [Fitzpatrick et al., 2017]. These are often investigated under the designation microintervention. Examples of microinterventions that do not need a great deal of therapist contact and can be initiated and guided by chatbots include psychoeducation, goal-setting conversations, and behavioral activation [Fitzpatrick et al., 2017; Stieger et al., 2018]. An example of a paradigm that is currently receiving much attention in this research context is therapeutic writing [Tielman et al., 2017; Bendig et al., 2018; Ho et al., 2018]. In the future, chatbots may have the potential to convey therapeutic content [Ly et al., 2017] and to mirror therapeutic processes [Fitzpatrick et al., 2017]. Combined with linguistic analyses such as sentiment analysis (a method for detecting moods), chatbots would be able to react to the mood of the users. This would allow the selection of emotion-dependent response options and the thematization of content adapted to the user's input [Ly et al., 2017], or the forwarding of relevant information about psychological variables to the practitioner. Given the possibilities that may emerge for psychotherapy, it is relevant to review the current state of chatbot research in this context.
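The idea of selecting emotion-dependent response options can be illustrated with a minimal Python sketch. It is not taken from any of the chatbots reviewed here: the word list `LEXICON`, the response texts in `RESPONSES`, and all function names are hypothetical, and the simple word-counting approach stands in for the far more elaborate sentiment analysis methods used in practice.

```python
import re

# Hypothetical mini-lexicon: word -> polarity weight (illustrative only).
LEXICON = {
    "sad": -1, "hopeless": -2, "anxious": -1, "tired": -1,
    "good": 1, "happy": 2, "calm": 1, "better": 1,
}

# Hypothetical emotion-dependent response options.
RESPONSES = {
    "negative": "That sounds difficult. Would you like to tell me more about it?",
    "neutral": "Thank you for sharing. What has been on your mind today?",
    "positive": "I am glad to hear that. What helped you feel this way?",
}


def sentiment_score(text: str) -> int:
    """Sum the polarity weights of all known words in the user's input."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return sum(LEXICON.get(token, 0) for token in tokens)


def classify(score: int) -> str:
    """Map a numeric score to a coarse mood category."""
    if score < 0:
        return "negative"
    if score > 0:
        return "positive"
    return "neutral"


def respond(text: str) -> str:
    """Select the response option that matches the detected mood."""
    return RESPONSES[classify(sentiment_score(text))]
```

A lexicon of this kind ignores negation ("not happy") and context, which is one reason why such a sketch cannot replace validated methods, particularly where the detection of crisis situations is concerned.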

Technical Implementation

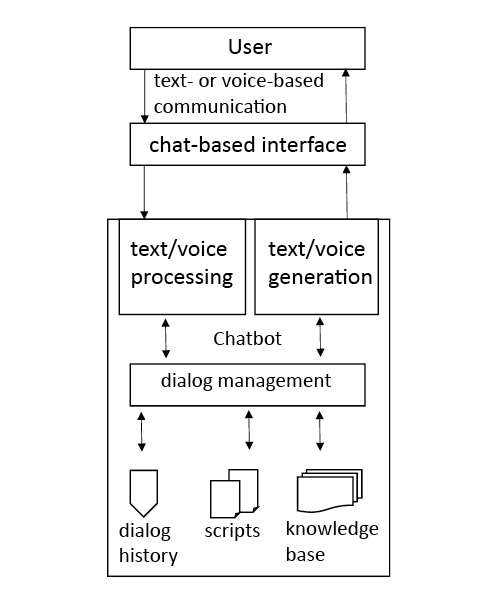

From a technical point of view, chatbots comprise various internal subcomponents [Gregori, 2017]. The component for recognizing information from the messages of the conversation partner is elementary. First and foremost, the intentions and relevant entities must be identified from the statements of the interlocutor. This is done using natural language processing (NLP), which is the machine processing of natural language using statistical algorithms. NLP translates the user's input into a language that the chatbot can understand (Fig. 2). The identified user intentions and entities, in turn, serve as the foundation for the appropriate response from the chatbot based on its components for dialogue management and response generation. Response generation is based, for example, on stored scripts, a predetermined dialogue, and/or an integrated knowledge base. The answer is made available to users via the chat-based interface, such as an Internet intervention or an app (Fig. 2).
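The sequence of components described above (recognition of intentions and entities, dialogue management, response generation) can be sketched in a few lines of Python. This is a deliberately simplified illustration under stated assumptions: the patterns in `INTENT_PATTERNS`, the vocabulary `MOOD_WORDS`, the texts in `SCRIPT`, and all function names are hypothetical, and regular expressions stand in for the statistical NLP models that a real system would use.

```python
import re
from dataclasses import dataclass, field

# Hypothetical intent patterns; real systems use trained NLP models instead.
INTENT_PATTERNS = {
    "greet": re.compile(r"\b(hello|hi|good morning)\b", re.IGNORECASE),
    "report_mood": re.compile(r"\bi (feel|am feeling|am)\b", re.IGNORECASE),
}

# Hypothetical entity vocabulary (mood words the bot can pick up).
MOOD_WORDS = {"sad", "anxious", "stressed", "tired", "happy", "calm"}

# Hypothetical stored script used for response generation.
SCRIPT = {
    "greet": "Hello. How are you feeling today?",
    "report_mood": "You said you feel {mood}. What do you think contributed to that?",
    "fallback": "I am not sure I understood. Could you tell me more?",
}


@dataclass
class ParsedMessage:
    intent: str
    entities: dict = field(default_factory=dict)


def understand(message: str) -> ParsedMessage:
    """Recognition step: identify the intent and entities of a message."""
    intent = "fallback"
    for name, pattern in INTENT_PATTERNS.items():
        if pattern.search(message):
            intent = name
            break
    tokens = re.findall(r"[a-z]+", message.lower())
    moods = [token for token in tokens if token in MOOD_WORDS]
    entities = {"mood": moods[0]} if moods else {}
    return ParsedMessage(intent, entities)


def manage_dialogue(parsed: ParsedMessage) -> str:
    """Dialogue management: decide which script entry to use."""
    if parsed.intent == "report_mood" and "mood" not in parsed.entities:
        return "fallback"  # a mood was announced but not recognized
    return parsed.intent


def generate_response(script_key: str, parsed: ParsedMessage) -> str:
    """Response generation: fill the script template with the entities."""
    return SCRIPT[script_key].format(**parsed.entities)


def reply(message: str) -> str:
    parsed = understand(message)
    return generate_response(manage_dialogue(parsed), parsed)
```

Calling `reply("I feel anxious today")` runs the message through all three steps and returns the mood-specific script entry with the recognized entity inserted.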

Chatbots vary in their complexity of interaction. Simpler dialogue components are based exclusively on rule-based sequences (such as if-then rules). Conversations are modeled as a network of possible states. Inputs trigger the state transitions and associated responses of the bot, analogous to stimulus-response systems [Storp, 2002]. The chatbot internally follows a predefined decision tree [Chowdhury, 2003; Smola et al., 2008] (illustrated in Fig. 3). The more complex chatbots also use knowledge bases or models of machine learning to construct their dialogues and AI to generate possible answers and to enhance the conversational proficiency of the bot (often called mental capacities in the research literature) [Lortie and Guitton, 2011; Ireland et al., 2016]. There are dedicated programming languages for programming chatbot dialogues. Artificial Intelligence Markup Language (AIML; http://alicebot.wikidot.com/learn-aiml) has become particularly well established [Klopfenstein et al., 2017].
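A rule-based dialogue modeled as a network of states can be sketched as follows. The example is hypothetical and is not the script of any chatbot discussed in this review: the state names, prompts, and keywords in `STATES` as well as the functions `step` and `run` are assumptions made for illustration.

```python
import re

# Hypothetical conversation graph: each state has a prompt and if-then rules
# (keyword -> next state). States without rules end the conversation.
STATES = {
    "start": {
        "prompt": "Would you like to do a short exercise today? (yes/no)",
        "transitions": {"yes": "choose", "no": "end"},
    },
    "choose": {
        "prompt": "Would you prefer a breathing or a writing exercise?",
        "transitions": {"breathing": "breathing", "writing": "writing"},
    },
    "breathing": {
        "prompt": "Breathe in slowly for four seconds, then breathe out.",
        "transitions": {},
    },
    "writing": {
        "prompt": "Please write a few sentences about an important event.",
        "transitions": {},
    },
    "end": {
        "prompt": "All right. You can come back at any time.",
        "transitions": {},
    },
}


def step(state: str, user_input: str) -> str:
    """Apply the if-then rules of the current state to one user input."""
    words = re.findall(r"[a-z]+", user_input.lower())
    for keyword, next_state in STATES[state]["transitions"].items():
        if keyword in words:
            return next_state
    return state  # no rule matched: stay in the state and ask again


def run(inputs, state: str = "start"):
    """Feed a list of user inputs through the graph and collect bot prompts."""
    transcript = [STATES[state]["prompt"]]
    for user_input in inputs:
        state = step(state, user_input)
        transcript.append(STATES[state]["prompt"])
        if not STATES[state]["transitions"]:
            break  # terminal state reached
    return state, transcript
```

For instance, `run(["yes", "writing please"])` moves from `start` via `choose` to the `writing` state. Because every reachable reply is fixed in advance, the content of such a dialogue can be fully reviewed by a psychotherapist before use, which is one practical advantage of scripted over generative approaches.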

Simplified, schematic representation of a dialogue with the chatbot SISU [Bendig, Erb, Meißner, 2018].


Classification of the Topic in the “Next-Generation” Clinical-Psychological/Psychotherapeutic Interventions

Through the advance of technology, online services are gaining in importance for classical psychotherapy [World Economic Forum, 2018]. Hybrid forms of online and offline psychotherapy are being investigated, the so-called blended care approaches [Baumeister et al., 2018]. Another area of research is stepped-care approaches, in which online services represent a low-threshold first step in a tiered model of care [Bower and Gilbody, 2005; Domhardt and Baumeister, 2018]. In the “next-generation” IMIs, chatbots could become increasingly important, especially as a natural interaction interface between users and innovative technology-driven forms of therapy. In the near future, chatbots will at first mainly conduct dialogues based on scripts created by psychotherapists [Dowling and Rickwood, 2013; Becker, 2018]. The dialogues can be of varying complexity depending on the content and objective. ELIZA [Weizenbaum, 1966], the first chatbot, simulated a psychotherapeutic conversation using a trivial script, resulting in a dialogue with users by means of set queries based on the user's inputs [client-centered psychotherapy according to Rogers, 1957]. The SISU chatbot simulates a conversation modeled on the paradigm of therapeutic writing, with elements of acceptance and commitment therapy, which instruct participants to write about important life events [Bendig et al., 2018]. An example of such a dialogue is shown in Figure 3.

Next, we present the current state of research on chatbots that are based on a psychological/psychotherapeutic evidence-based script aimed at reducing the symptoms of psychological illness and improving mental well-being – that is, promoting mental health.

Method

The present article is a scoping review of chatbots in clinical psychology and psychotherapy. This method is used for quick identification of research findings in a specific field, as well as summarizing and disseminating these findings [Peterson et al., 2017]. Scoping reviews are particularly suitable for presentation of complex topics, as well as for generating research desiderata. The objective of the review was defined, the inclusion and exclusion criteria were set, the relevant studies were searched, and titles, abstracts, and full texts were screened (E.B. and L.S.-T.). The generalizability of the research results is not a systematic goal of the present study [Moher et al., 2015; Peterson et al., 2017].

Inclusion and Exclusion Criteria

Studies that utilized chatbots to promote mental health were included. Only chatbots based on evidence-based clinical-psychological/psychotherapeutic scripts were considered. Thus, the only studies that were included used chatbots based on principles that are judged positively in classical face-to-face psychotherapy (e.g., principles and techniques covered by the psychotherapy guidelines). Populations both with and without mental and/or chronic physical conditions were included. Only those studies were included that examined the psychological variables of depression, anxiety, stress, or psychological well-being as primary or secondary endpoints. Other inclusion criteria were adult age (age 18+) and complete automation of the investigated (micro)interventions. The latter criterion excluded so-called Wizard-of-Oz studies, in which a person, such as a member of the research team, pretends to be a chatbot. Primary research findings were included, as well as study protocols that were generated in the context of, for example, randomized controlled (pilot) studies, experimental designs, or pre-post studies.

Search Strategy

To find suitable studies, an iterative process of keyword search and preliminary data analysis in March 2018 led to a final, systematic search in the databases PsycArticles, All EBM Reviews, Ovid MEDLINE®, Embase, PsycINFO, Cochrane databases, and PsyINDEX, using the search string: chatterbot OR chatbot OR social bot OR conversational agent OR softbot OR virtual agent OR software agent OR conversational agent OR automated agent AND (psych* OR counseling OR mental health OR psychotherapy OR therap* OR mental* OR clinical psychology). The hits were independently screened by 2 authors (E.B. and L.S.-T.). After removal of duplicates, 148 articles remained, which were then reduced in accordance with the search criteria in 2 rounds of title and abstract screening to 17 full texts (E.B. and L.S.-T.). Studies in which there was disagreement regarding inclusion/exclusion criteria were discussed (E.B. and L.S.-T.), resulting in 6 finally included articles. The search process is depicted in Figure 4.

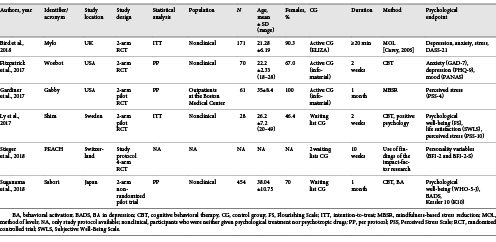

Findings: General Characteristics of the Included Studies

Table 1 provides an overview of the study characteristics. All studies were published in the last 8 years, with half of them coming from the USA. The sample size varied between N = 28 and N = 496, with a mean of 156.8 (SD = 174.52). Participants were adults (mean age = 28.54 years, SD = 5.48), and 50% of the studies included students. Participants were either experiencing a problem that was causing distress [Bird et al., 2018], displaying symptoms of anxiety and depression [Fitzpatrick et al., 2017], or had not been screened for psychological issues before study inclusion [Ly et al., 2017; Suganuma et al., 2018]. In the study by Gardiner et al. [2017], patients from outpatient clinics were included, while in the study by Suganuma et al. [2018], working people, students, and housewives were included (Table 1). Chatbots that are essentially based on cognitive-behavioral scripts have been the most frequently studied (k = 5). Newer approaches from the third wave of behavioral therapy (such as mindfulness-based stress reduction) were also used (k = 1). The most commonly studied endpoints were depression, anxiety, mental well-being, and stress. The majority of participants in all the studies were female (>50%) and from nonclinical populations, with the largest proportion being students. The studies are mostly so-called feasibility or pilot studies, with the objective to assess acceptance and make an initial assessment of effectiveness (practicability).

Evidence about Chatbots to Promote Mental Health

The MYLO chatbot (Manage Your Life Online, available at https://manageyourlifeonline.org) offers a self-help program for problem solving when a person is in distress [Gaffney et al., 2014]. The MYLO script is based on Method of Levels therapy [Carey, 2006]. In a randomized controlled trial, participants were assigned either to the ELIZA [Weizenbaum, 1966] program, based on Rogerian client-centered therapy, or to the MYLO program [Bird et al., 2018]. Both chatbots impart problem-solving strategies and guide the user to focus on a specific problem. Through targeted questions, participants are encouraged to approach a circumscribed problem area from different perspectives. One objective of the study was to evaluate the effectiveness of MYLO in reducing problem-related distress compared to the active control group that used ELIZA. Self-reported distress improved significantly in both groups (F(2, 338) = 51.10, p < 0.001, η² = 0.23) [Bird et al., 2018]. MYLO was not superior to ELIZA in this regard, but participants rated MYLO subjectively as more helpful. This replicates the results of a smaller laboratory study on MYLO, which followed the same structure [Gaffney et al., 2014].

The WOEBOT chatbot offers a self-help program to reduce anxiety and depression [Fitzpatrick et al., 2017], with a script based on cognitive-behavioral principles. The aim of the study was to evaluate the feasibility, acceptability, and effectiveness of WOEBOT in a nonclinical sample (N = 70): 34 participants were randomized to the experimental group (EG), and 36 were assigned to an active control group (CG) (an eBook on depression). The authors reported a significant reduction in depressive symptoms (PHQ-8) [Kroenke et al., 2009] in the EG (F(1, 48) = 6.03, p = 0.017). With regard to anxiety symptoms, a significant decrease was observed in both groups, but the groups did not differ significantly from each other (p = 0.58) [Fitzpatrick et al., 2017]. In terms of feasibility and acceptance, chatbot users were significantly more satisfied than the participants in the active CG, both overall and with respect to the content [Fitzpatrick et al., 2017].

The SHIM chatbot is a self-help program to improve mental well-being [Ly et al., 2017]. The SHIM script is based on principles of cognitive-behavioral therapy as well as elements of positive psychology. The aim of the randomized controlled pilot study was to evaluate the effectiveness of SHIM and the adherence of the 28 participants: 14 in the EG compared to 14 in the waiting list CG (nonclinical sample). Intention-to-treat analyses revealed no significant differences between the groups regarding psychological well-being (Flourishing Scale [Diener et al., 2010], Subjective Well-Being Scale [Diener et al., 1985]) and stress (Perceived Stress Scale [PSS-10] [Cohen et al., 1983]), while adherence to completion of the intervention was high (n = 13 in the EG, n = 14 in the waiting list CG), which speaks to the practicability of the chatbot. Completer analyses revealed significant effects for psychological well-being (Flourishing Scale) (F(1, 27) = 5.12, p = 0.032) and perceived stress (PSS-10) (F(1, 27) = 4.30, p = 0.048).

The SABORI chatbot was studied in a nonrandomized prospective pilot study [Suganuma et al., 2018]. SABORI offers a preventive self-help program for the promotion of mental health. The underlying script is based on principles of cognitive behavioral therapy and behavioral activation. Psychological well-being (WHO-5-Japanese [Psychiatric RU and Psychiatric CNZ, 2002]), psychological distress (Kessler 10, Japanese version [Kessler et al., 2002]), as well as behavioral activation (Behavioral Activation for Depression Scale [Kanter et al., 2009]) were compared for 191 participants in the EG versus 263 participants in the CG. Analyses of the data showed significant effects for all outcome variables (all p < 0.05), which suggests that the chatbot was practicable as a prevention program for the sample.

The GABBY chatbot was studied in a randomized controlled pilot study [Gardiner et al., 2017]. This self-help program is a comprehensive program for behavioral changes and stress management. The underlying stress reduction script is based on the principles of mindfulness-based stress reduction [Gardiner et al., 2013]. Unlike the EG (n = 31), the active CG (n = 30) received the contents of GABBY only as informational material (the contents of GABBY in written form + accompanying meditation exercises on CD/MP3). The findings showed no significant difference between the groups in terms of perceived stress (p > 0.05), but there was a difference, for example, in stress-related alcohol consumption (p = 0.03). The results suggested the practicability of GABBY regarding the primary endpoints of feasibility (e.g., adherence, satisfaction, and proportion of participants belonging to an ethnic minority).

PEACH, a chatbot that is currently undergoing a randomized controlled trial [Stieger et al., 2018], is geared towards personality coaching. Based on research findings about mechanisms of action in psychotherapy, scripts were compiled for the microinterventions presented by PEACH. Among the things covered are the development of change motivation, psychoeducation, behavioral activation, self-reflection, and resource activation. Measures for acceptance and feasibility are explicitly considered in this study.

Some of the studies that did not fulfill the inclusion criteria of this review and were excluded from the full-text review (n = 11) nevertheless suggest further promising fields, which are yet to be developed in a broader context (health care in general) (Table 2).

Discussion

The present scoping review illustrates the growing attention being paid to psychological-psychotherapeutic (micro)interventions mediated via chatbot. The technology of chatbots can generally still be described as experimental. The included studies are pilot studies, and all 6 were mainly concerned with evaluating the feasibility and acceptance of these chatbots. The findings suggest the practicability, feasibility, and acceptance of using chatbots to promote mental health. Participants seem to benefit from the content provided by the chatbot for psychological variables such as well-being, stress, and depression. In regard to evaluating effectiveness, it should be noted as a limitation that the samples are often very small and lack sufficient statistical power for a high-quality effectiveness assessment. The majority of the studies were published during the past 2 years, underlining the current relevance of the topic and suggesting a substantial increase in research activity in this area over the coming years.

The first trials of chatbots for the treatment of mental health problems and mental disorders have already been done, for example the study by Gaffney et al. [2014], which used chatbots to help resolve problems caused by distress, as well as the study by Ly et al. [2017], in which chatbots were used to improve well-being and reduce perceived stress. Fitzpatrick et al. [2017], who used chatbots in a self-help program for students combating depression and anxiety disorders, were also able to support these findings with their study.

The descriptions of the technical background of the chatbots used, as well as more detailed descriptions of the content conveyed by the chatbots, are altogether too brief to assess their objective comprehensibility and to enable replication of the results. Since many startups are currently being created worldwide that use chatbots for clinical psychology and psychotherapy, commercial conflicts of interest are also an issue for the research teams or their clients (e.g., SHIM).

Opportunities, Challenges, and Limitations for Clinical Psychology and Psychotherapy

Evidence-based research in this field is still quite rare and qualitatively heterogeneous, which is why the actual influence of chatbots in psychotherapy cannot yet be reliably estimated. The rapid growth of technology has left ethical considerations and the development of necessary security procedures and framework conditions short of a desirable minimum.

For example, many health-related chatbots on the market have not been empirically validated (e.g., TESS, LARK, WYSA, and FLORENCE). There are also many hybrid forms of chatbots and other therapeutic online tools. JOYABLE (https://joyable.com/) and TALK SPACE (https://www.talkspace.com/), for example, are commercial chatbots that are offered in combination with online sessions with a therapist.

In the current public perception, the topic of new technologies in the field of mental health is fraught with reservations and fears [DGPT, 2018; World Economic Forum, 2018]. It is important to keep informed about developments in this area to ensure that future technologies do not find their way into health care through the efforts of technologically and commercially driven interests. Rather, the effectiveness, but also the security and acceptance of new technologies, should be decisive for their implementation in our health care system. Serious considerations of the potential benefits and hazards of using chatbots are among the hitherto largely neglected topics. Questions regarding data protection and privacy deserve particular attention here, given the very intimate data that users under stress may share too carelessly with dubious providers. The storage, safeguarding, and potential further processing and use of these data require urgent regulation to prevent misuse and to ensure the best-possible security for participants. Questions also arise about the susceptibility of participants to “therapeutic” advice from chatbots whose algorithms may be nontransparent. This pertains both to the danger of potential technical sources of error that may cause harmful chatbot comments and to the targeted use of the chatbots for purposes other than the well-being of the users (e.g., solicitation/in-app purchase options for other products). Finally, there is a need not only for more effective chatbots but also for chatbots with fewer undesired side effects. This refers especially to the ability of chatbots to respond appropriately in situations of crisis (e.g., suicidal communication).

Potential Opportunities and Advantages of Using Chatbots

Chatbots could be seen as an opportunity to further develop the possibilities offered by psychotherapy [Feijt et al., 2018]. An important aspect of the role of chatbots in clinical care is to address people in need of treatment who are not receiving any treatment at all because of various barriers [Stieger et al., 2018]. Chatbots could bridge the waiting time before approval of psychotherapy and provide low-threshold access to care [Grünzig et al., 2018]. Psychiatric problems or illnesses could be addressed by chatbot interventions in the future, whereby at least an improvement in symptoms could be achieved compared to no treatment at all [Andersson et al., 2016; Carlbring et al., 2018]. Chatbots can also offer new opportunities for accessibility and responsiveness: People with a physical illness and depressive symptoms, for example, could interact in the future with the chatbot when they are being discharged from the hospital to receive psychoeducation and additional assistance relating to the psychological aspects of their illness [Bickmore et al., 2010b].

Another important application for chatbots is as an adjunct to psychotherapy. Chatbots could increase treatment success by increasing adherence to cognitive-behavioral homework. It is conceivable that by adopting microinterventions that require little therapist contact (such as anamnesis or psychoeducation), the therapists will have time to see more patients or to give more intensive care [Feijt et al., 2018]. Chatbots could also be used to improve the communication of therapeutic content [Feijt et al., 2018].

To advance research in this area, piloted chatbots should be studied in clinical populations. The content implemented to date is expandable (Table 1). To actually be a useful supplement to clinical psychological/psychotherapeutic services, substantial research seems necessary on the conceptual side as well. This applies, among other things, to the development of a robust database of psychotherapeutic conversational interactions, which could allow chatbots, drawing upon such elaborated databases, to achieve more effective interventions than they do at the present time. In addition, issues such as acceptance, sustainability, and especially security, as well as subsequent tests of the technology are corrective elements that, amid the prevailing euphoria over digitalization, should become an integral part of future scientific funding programs.

Potential Challenges and Limitations of the Use of Chatbots

Concerning the use of chatbots to promote mental health, numerous ethical and legal (data protection) questions have to be answered. Studies on the anthropomorphization of technology (see the section Performance of the Chatbot above) show that users quickly endow technical systems with human-like attributes [Cristea et al., 2013]. It will have to be clarified whether dangers may arise if users take a chatbot to be a real person and the content they express overwhelms the capabilities of the chatbot (for example, an unclear statement of suicidal thoughts).

In terms of data protection law, it must be recognized that some of the conversational content is highly sensitive. In the development of new technological applications in general and chatbots in particular, it is important to systematically consider aspects of data security and confidentiality of communications from the outset. The context of mental health makes the requirements of data privacy and the security of the chatbot particularly important. Quality criteria should be developed to identify evidence-based chatbots and to distinguish them from the multitude of unverified programs. New legislation that deals with this topic is needed to regulate the use of chatbots promoting mental health and to protect private content [Stiefel, 2018].

The German and European legal systems lack quality criteria for the implementation of IMIs in general and chatbots in particular. It is often difficult to differentiate between applications that are relevant to therapy and those that are not [Rubeis and Steger, 2019]. The certification of therapy-relevant applications as medical devices could resolve this problem in the future. Medical devices receive a CE (Conformité Européenne) label. This certificate could allow therapy-relevant chatbot applications to be distinguished from those that promise no clinical benefit or display low user security [Rubeis and Steger, 2019].

Future Prospects and Research Gaps

The findings of the present review suggest that chatbots may potentially be used in the future to promote mental health in relation to psychological issues. A review of IMIs from an ethical perspective by Rubeis and Steger [2019] affirms the potentially positive effects on users' well-being, the low risk, the potential for equitable care for mental disorders, and greater self-determination for those affected. Given the current limitations regarding the generalizability and applicability of chatbots in psychotherapy, the present findings should be interpreted with caution. The findings of yet-to-be-performed randomized controlled trials of chatbots in the mental health context may, in the future, be integrated into the picture of research on psychological Internet- and mobile-based interventions. IMIs that promote mental health, for example by targeting depression, have already proven effective [Karyotaki et al., 2017; Königbauer et al., 2017]. Some of the studies that did not meet the inclusion criteria of the present review and were excluded from the full-text review (n = 11) suggest promising fields that are yet to be developed in a broader context (health care in general) [Bickmore et al., 2010a, 2010b, 2013; Hudlicka, 2013; Ireland et al., 2016; Sebastian and Richards, 2017]. This applies, for example, to studies using chatbots to increase medication adherence [Bickmore et al., 2010b; Fadhil, 2018] and the study by DeVault et al. [2014], which used semistructured interviews to diagnose mental health problems (Table 2).

Further research is needed to improve the psychotherapeutic content of chatbots and to investigate their usefulness through clinical trials. The studies often originate from authors who mainly approach psychological issues (such as depression) from a background in information technology and begin to collaborate with clinical-psychological scientists after developing their chatbots (bottom-up). There is thus a paucity of theoretical work that initially evaluates what is needed in the clinical-psychological context (top-down). Using such analyses, assessments could be made about how certain psychological endpoints should be achieved and why.

There is a need for research into different forms of use (e.g., as an accompaniment to therapy vs. as a stand-alone intervention). Needs analyses could substantially enhance the research literature on the development of chatbots for use in therapy. What do therapists want? How can chatbots be designed so that therapists feel effectively supported by the application? It should also be examined whether chatbots can increase overall therapeutic success and whether, for example, they prove to be effective after the conclusion of psychotherapy to stabilize the treatment effects. What the specific target groups require of the interface still seems to be an open question. Some studies deal with the question of which aspects of the design might be chosen for older people [Kowatsch et al., 2017] or for people with cognitive impairment [Baldauf et al., 2018]. It is important that the development of chatbots in clinical psychology and psychotherapy takes place within a protected and guideline-supported framework. Answering ethical questions about the information provided to people who live with a mental disorder is another priority task [Rubeis and Steger, 2019].

The review presents points of departure for future research in this field. Besides the need for replication of the studies in a randomized controlled design, the investigation of piloted (i.e., already proven potentially effective) chatbot interventions in clinical populations is another area still to be explored. Given that none of the previous studies are based on a true clinical sample, the transferability of the results to the psychotherapeutic context remains in question. Psychotherapeutic processes are much more complex than the conversational logic of current chatbots can convey.

Acknowledgment

The study was translated into English by Susan Welsh.

Disclosure Statement

The authors declare that there are no conflicts of interest and that the research was conducted without any commercial or financial relationships that could potentially create a conflict of interest.

Funding Sources

There were no sources of funding for this study.

Author Contributions

The conceptualization and development of the manuscript were performed by E.B.; B.E. and H.B. commented on the first draft. The comments were incorporated by E.B. The search and review of relevant literature were performed by E.B. and L.S.-T. Manuscript preparation and editing were performed by E.B., and revision and finalization by E.B., B.E., and H.B.

![Simplified, schematic representation of a dialogue with the chatbot SISU [Bendig, Erb, Meißner, 2018].](https://karger.silverchair-cdn.com/karger/content_public/journal/ver/32/suppl.%201/10.1159_000501812/3/m_000501812_f03.jpeg?Expires=1725274068&Signature=V24B-Em05KhzxdtaOxC4im3zmDTklHpeMayckVja8WPkIGYJwIecigdQT9p~oMUX94Id8cZEmPPtaaVMz6sZdobld96VMJXnLnSc5Yu7j9HtqugpoE~aJkDI0D9wqWk2EMpMoN1WWVYdnGdVU8UsUdOIRhlUTV4chWHu52Wd5RrjjHnhAdlT8NtT6SK-w9-5cTCdmf0juNoVP2IX785DAf2jUVHCl52mTf5GVl7D7cGKTFUkdsICjSYy39GmAZL4U3TIjoCf4wW16ErD42wynONXxisCycCZdMqQjMCWScRRu9fF3Ghk8OFguC4ZrypeS4Of1enws5wfuVqTTqnFZw__&Key-Pair-Id=APKAIE5G5CRDK6RD3PGA)